Assessing the 2014 Election

Updated index includes 2014 data

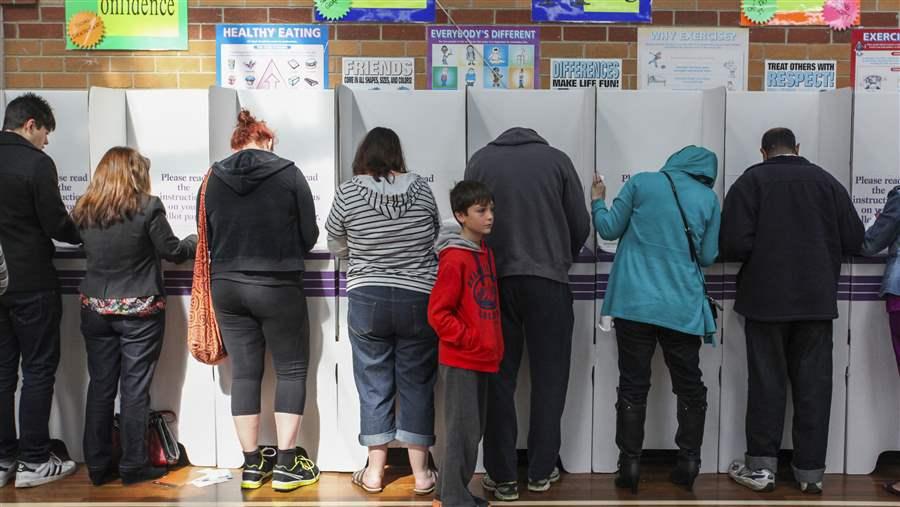

© The Pew Charitable Trusts

Overview

In 2013, The Pew Charitable Trusts unveiled the Elections Performance Index (EPI), which provided the first comprehensive assessment of election administration in all 50 states and the District of Columbia. The interactive tool introduced the index’s performance indicators, summarized 2008 and 2010 data, and ranked the states according to their overall EPI averages, or scores, which give users a way to evaluate states’ election performance side by side and over time. The second edition, released the following year, added data from the 2012 election and offered users their first opportunity to compare a state’s performance across similar elections, specifically the 2008 and 2012 presidential contests. Now, the EPI has been updated to include data from 2014, the fourth election to be analyzed in the series, allowing users for the first time to compare data from two midterm elections: 2010 and 2014.

The data show that, overall, states continue to improve, performing better in 2014 than four years earlier. Although voters turned out at the lowest rate in a midterm election since 1942, fewer military and overseas ballots were rejected, and more online tools were available to help citizens find essential information, such as where to vote and who was on the ballot. Additionally, more states offered voters the option to register online.

Notably, eight states improved their rankings by 10 or more places compared with 2010: Illinois, Maryland, Nebraska, Ohio, South Carolina, Vermont, Virginia, and West Virginia.

Key findings of the EPI’s 2014 analysis:

- Elections performance improved overall. Nationally, the average of all indicators across all states rose 5.1 percentage points from 2010 to 2014. Forty states plus the District of Columbia improved their scores, while 10 states’ averages declined.

- High-performing states tended to remain high-performing and vice versa. Most of the highest-performing states in 2014—those in the top 25 percent—were also among the highest performers in 2010, and six were high performers in all elections measured in the index: 2008, 2010, 2012, and 2014. The lowest performers followed a similarly consistent pattern.

- Gains were seen in most indicators. Of the 15 indicators used in both 2010 and 2014, national performance improved on nine, including a decrease in the rate of military and overseas ballots rejected and an increase in the number of states providing online voter registration.1

The findings reveal steps that states can take to make elections more cost-effective and efficient. Among the most valuable are collecting more and better data, implementing online voter registration and upgrading registration systems, and using polling place management tools to allocate resources and reduce wait times, all strategies recommended by the bipartisan Presidential Commission on Election Administration.2

This brief examines the latest EPI findings and highlights trends that have emerged from the expanding analysis as well as best practices that states should consider as they work to improve their administration of elections.

What Is the EPI?

The Elections Performance Index is intended to help policymakers, election administrators, and others:

- Evaluate elections based on data, not anecdotal evidence.

- Compare elections performance across states and over time.

- Identify potential problem areas to be addressed.

- Measure the impact of changes in election policy or practice.

- Highlight trends that otherwise might not be identified.

- Use data to demonstrate to state and local policymakers where there is a need for additional financial, technological, and other resources.

- Educate voters about election administration by providing context about how the process works.

Pew partnered with the Massachusetts Institute of Technology in July 2010 to bring together an advisory group of state and local election officials and academics from the country’s top institutions to guide development of the index. The advisory group held a series of meetings to select the best ideas from indices in other public policy areas, identify and validate existing data sources, and determine the most useful ways to group available data.

The EPI tracks 17 distinct indicators of election performance, selected for their completeness, consistency, reliability, and validity.* For more information on how the indicators were selected and computed, or additional analysis of their meaning, please see the online interactive report at www.pewstates.org/epi-interactive or the report’s methodology at www.pewstates.org/epi-methodology.

The 17 indicators are:

- Data completeness. How many jurisdictions in the state reported statistics on the 18 core survey items in the U.S. Election Assistance Commission’s Election Administration and Voting Survey?

- Disability- or illness-related voting problems. What percentage of the state’s voters reported to the U.S. Census Bureau that they did not cast a ballot due to an “illness or disability (own or family’s)”?

- Mail ballots rejected. What percentage of mail ballots were not counted out of all ballots cast?

- Mail ballots unreturned. What percentage of mail ballots sent out by the state were not returned?

- Military and overseas ballots rejected. What percentage of military and overseas ballots returned by voters were not counted?

- Military and overseas ballots unreturned. What percentage of military and overseas ballots sent out by the state were not returned?

- Online registration availability. Were voters allowed to submit new registration applications online?

- Post-election audit required. Was a valid statewide audit of voting systems conducted to ensure the accuracy of the election results?

- Provisional ballots cast. What percentage of all voters had to cast a provisional ballot on Election Day?

- Provisional ballots rejected. What proportion of provisional ballots were not counted out of all ballots cast?

- Registration or mail-ballot problems. How many people reported to the Census Bureau that they did not cast a ballot because of “registration problems,” including not receiving a mail ballot or not being registered in the appropriate location?

- Registrations rejected. What proportion of submitted registration applications were rejected for any reason?

- Residual vote rate. What percentage of the ballots cast contained an undervote (i.e., no vote for a particular contest or contests) or an overvote (i.e., more than one candidate marked in a single-winner race), indicating either voting machine malfunction or voter confusion?†

- Turnout rate. What percentage of the voting-eligible population cast ballots?

- Voter registration rate. What percentage of citizens reported to the Census Bureau that they were registered to vote?

- Voting information lookup tools. Did the state offer basic online tools, so voters could look up their registration status, find their polling place, get specific ballot information, track mail ballots, and check the status of provisional ballots?

- Voting wait time. How long, on average, did voters report waiting to cast their ballots?‡

* A state’s overall EPI average is calculated from its performance on the 16 indicators used in 2014, relative to all states. A state with an average of 100 percent would have the best value of any state on every indicator across both 2010 and 2014, and a state with an average of zero would have the worst value of any state on every indicator across both years. Because these averages are based on the performance of all states in those years, even a state with a 100 percent average has room for improvement in future elections.

† The residual vote rate indicator is not measured for midterm elections because adequate data are not available to create a metric comparable to the rate used for presidential elections.

‡ Data for wait times were not available in 2010 but were in 2014.
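The first footnote above describes the overall average only in outline: 100 percent means the best value on every indicator across both midterm years, and zero means the worst. One plausible reading, offered here as a minimal sketch rather than the index's published method, is a min-max rescaling of each indicator over the pooled 2010 and 2014 values, followed by a simple mean of whatever indicators a state has. The states, values, and names (`RAW`, `HIGHER_IS_BETTER`, `normalize`, `epi_average`) are all hypothetical.

```python
from statistics import mean

# Hypothetical data: (state, year) -> indicator values; None marks missing data,
# e.g. wait times, which were not available in 2010.
RAW = {
    ("A", 2010): {"turnout": 40.0, "uocava_rejected": 8.0, "wait_time": None},
    ("A", 2014): {"turnout": 36.0, "uocava_rejected": 4.0, "wait_time": 3.0},
    ("B", 2010): {"turnout": 50.0, "uocava_rejected": 6.0, "wait_time": None},
    ("B", 2014): {"turnout": 44.0, "uocava_rejected": 2.0, "wait_time": 9.0},
}

# Assumed direction of each indicator: True when a larger value is better.
HIGHER_IS_BETTER = {"turnout": True, "uocava_rejected": False, "wait_time": False}


def normalize(raw, higher_is_better):
    """Rescale each indicator to 0..1 against the pooled worst and best values."""
    scaled = {key: {} for key in raw}
    for indicator, higher in higher_is_better.items():
        observed = [v[indicator] for v in raw.values() if v.get(indicator) is not None]
        if not observed:
            continue  # nobody reported this indicator
        low, high = min(observed), max(observed)
        for key, values in raw.items():
            value = values.get(indicator)
            if value is None:
                continue  # missing indicators do not count toward the average
            if high == low:
                score = 1.0  # every state tied on this indicator
            else:
                score = (value - low) / (high - low)
                if not higher:
                    score = 1.0 - score  # flip so that 1.0 is always "best"
            scaled[key][indicator] = score
    return scaled


def epi_average(scaled):
    """Average the available normalized indicators and express as a percent."""
    return {key: 100.0 * mean(list(s.values())) for key, s in scaled.items() if s}


averages = epi_average(normalize(RAW, HIGHER_IS_BETTER))
for key in sorted(averages):
    print(key, round(averages[key], 1))
```

Because the best and worst values come from the pooled years, a state's percentage depends on how every other state performed, which is why even a 100 percent average would not mean there is nothing left to improve.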

Overall elections performance improved

The addition of 2014 data to the Elections Performance Index makes it possible for the first time to compare a state’s performance over time and against other states in a midterm election. In general, state election administration improved between 2010 and 2014. This was true for the performance of individual states compared with prior years and nationwide on many indicators.

Nationally, states’ overall scores, which are calculated as an average of all indicators, increased 5.1 percentage points on average from 2010 to 2014.

States

Forty states and the District of Columbia improved their overall scores, compared with 2010:

- 24 states and the District raised their overall performance by more than the national average.

- 16 states improved but by less than the national average.

- 10 states had declines in their overall performance.

The 24 states (plus the District) that improved more than the national average vary widely in size and region; they cover the political spectrum from deep blue to battleground to solid red.

States at the top and the bottom of the rankings showed improvement. Maryland, a founding member of the Electronic Registration Information Center (ERIC) in 2012, improved its score by more than 10 percentage points from 2010 to 2014. The state, which was already a solid performer, ranked 19th in 2010 and rose to ninth in 2014, in part by adding online voter registration and reducing its rejected registrations rate. The single biggest jump was in Mississippi, which raised its score by 19 percentage points from 2010. The gains were primarily the result of better data collection and reporting. The improvement is a good sign for Mississippi, but the state has much work to do: Its EPI average remained well below the national average and among the lowest in 2014.

Although the national trend was clearly upward, not all the news was good. Ten states’ overall scores declined: Arkansas, Kentucky, Louisiana, New Mexico, North Dakota, South Dakota, Tennessee, Texas, Utah, and Wyoming. Despite its decrease, North Dakota, which was the top-performing state in 2010, remained first overall.

High-performing states stay strong

The index continues to show that certain states consistently perform at a high level on elections, and others are repeatedly underperforming. The highest-performing states in 2014, those in the top 25 percent, were, in alphabetical order, Colorado, Connecticut, Delaware, Maryland, Michigan, Minnesota, Missouri, Montana, North Dakota, Oregon, Virginia, and Wisconsin. Nine of these—Colorado, Connecticut, Delaware, Michigan, Minnesota, Montana, North Dakota, Oregon, and Wisconsin—were also high performers in 2010; and six—Colorado, Delaware, Michigan, Minnesota, North Dakota, and Wisconsin—were high performers in 2008, 2010, 2012, and 2014.

Over time, better data and a clearer understanding of the characteristics of these two groups will help all states identify the problems that most commonly hinder improvement and recognize truly effective election administration and policy.

Registration Policies Continue to Improve Election Performance

North Dakota, Minnesota, and Wisconsin were among the top four states in each edition of the EPI. This consistently strong performance could be due, in part, to their voter registration policies. States that offer more convenient and efficient ways for voters to register and update their registrations minimize many common issues, such as rejected registrations, use of provisional ballots, and nonvoting due to registration problems.

Seven of the 10 states with the lowest rates of registration or mail-ballot problems in 2014 (Idaho, Maine, Minnesota, New Hampshire, North Dakota, Wisconsin, and Wyoming) allowed Election Day registration or did not require voter registration in the 2010 and 2014 midterms as well as in the two presidential elections analyzed in the EPI. Maine and Minnesota had the lowest rates of these problems, at approximately 1 percent.

Additionally, states that adopt online voter registration can increase the accuracy of their rolls while also reducing costs.* Twenty states and the District of Columbia allowed online voter registration in 2014, up from eight states in 2010, and 10 of them were among the 15 highest-performing states overall. States using the latest technology to conduct data matching of voter registration lists, such as those participating in ERIC, have reduced the numbers of provisional ballots cast and rejected, as well as the proportion of would-be voters who fail to vote due to a registration problem.† Eleven states and the District were ERIC members in 2014, and eight of them were among the 15 highest-performing states in 2014.

The Presidential Commission on Election Administration recommends both online voter registration and participation in ERIC.‡

* The Pew Charitable Trusts, “Understanding Online Voter Registration” (January 2014), http://www.pewtrusts.org/~/media/assets/2014/01/28/understanding_online_voter_registration.pdf?la=e.

† RTI International, Electronic Registration Information Center (ERIC): Stage 1 Evaluation, The Pew Charitable Trusts (Dec. 10, 2013), http://www.rti.org/pubs/eric_stage1report_pewfinal_12-3-13.pdf.

‡ See also The Pew Charitable Trusts, “Online Voter Registration Across the U.S.” (Oct. 20, 2015), http://www.pewtrusts.org/US-OVR; and Electronic Registration Information Center, ericstates.org.

Low performers still face challenges

Eleven states—Alabama, Arkansas, California, Hawaii, Idaho, Kentucky, Mississippi, New York, Oklahoma, Texas, and Wyoming—and the District of Columbia ranked in the lowest 25 percent on the index in 2014. Eight of these—Alabama, California, the District, Hawaii, Idaho, Mississippi, New York, and Oklahoma—also ranked in the bottom quartile in 2010.

Importantly, because overall averages reflect the performance of other states, even dramatic improvement or decline within a state may not be reflected in its ranking relative to other states. As noted earlier, this is evident in the case of Mississippi but also of New York, which had a 17 percentage point increase in its score; California, which had a 9 point increase; and Idaho and Oklahoma, which each had 7 point increases. Even with their improvements, these states still fell into the group of low performers because widespread improvement elsewhere raised the national average significantly. This highlights the value of considering multiple points of comparison, made possible by the index: evaluating states against the national average, evaluating state against state, and evaluating a state over time.
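The three points of comparison named above (over time, state against state, and against the national average) can be read off side by side. The sketch below uses invented scores for four hypothetical states; the function names `rank_states` and `compare` are illustrative only and are not part of the index.

```python
def rank_states(scores):
    """Map each state to its rank; rank 1 is the highest score."""
    ordered = sorted(scores, key=scores.get, reverse=True)
    return {state: position for position, state in enumerate(ordered, start=1)}


def compare(before, after):
    """Summarize each state's change over time, in rank, and against the average."""
    ranks_before, ranks_after = rank_states(before), rank_states(after)
    national_after = sum(after.values()) / len(after)
    summary = {}
    for state in after:
        summary[state] = {
            "score_change": after[state] - before[state],         # over time
            "places_gained": ranks_before[state] - ranks_after[state],  # vs. other states
            "vs_national_average": after[state] - national_after,  # vs. the average
        }
    return summary


# Invented overall scores (percent) for four hypothetical states.
scores_2010 = {"W": 70.0, "X": 62.0, "Y": 55.0, "Z": 40.0}
scores_2014 = {"W": 74.0, "X": 69.0, "Y": 66.0, "Z": 57.0}

summary = compare(scores_2010, scores_2014)
print(summary["Z"])
```

In this made-up example, state Z gains 17 points yet stays in last place and below the average, because every other state improved as well.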

Indicators

Individual indicators reveal critical information about what is improving a state’s overall performance as well as what consistently holds a state back.

The nationwide view

From 2010 to 2014, national scores improved on nine of the 15 indicators, with notable gains in seven areas:3

- 26 states and the District of Columbia reported 100 percent complete data to the Election Assistance Commission, up from 11 states and the District in 2010.

- For the 2014 general election, online voter registration was offered in 20 states and the District, compared with 13 states in 2012 and eight in 2010.4

- 14 states offered voters all five Pew-recommended online lookup tools in 2014, up from 10 in 2010. For the first time, all states offered at least one online tool. California and Vermont, the last two states to add a tool, used Pew’s Voting Information Lookup Tool as a polling place locator: https://www.votinginfoproject.org/projects/vip-voting-information-tool.

- The proportion of military and overseas ballots that were not returned fell from 53.1 percent in 2010 to 43.3 percent in 2014; nonreturn rates declined in 30 states and the District, and of those, 19 and the District improved by 10 or more percentage points.

- The percentage of military and overseas ballots rejected decreased by almost 2 points nationally, from 6.6 percent in 2010 to 4.8 percent in 2014, with rates declining in 26 states.

- The rate of nonvoting due to registration or mail ballot issues declined by slightly more than half a percentage point; rates declined in 44 states and the District.

- The rate of nonvoting due to illness declined nationally by slightly more than 1 percentage point, from 12.9 percent in 2010 to 11.8 percent in 2014; rates fell in 43 states and the District.

Nationwide, averages for six indicators decreased in 2014, although most of the declines were marginal.5 Most notably, voter participation was at its lowest rate since World War II: Turnout decreased approximately 5 points, from 41.8 percent in 2010 to 36.7 percent in 2014.6

Additionally, unreturned mail ballots increased by more than 2 percentage points, from 12.9 percent in 2010 to 15.5 percent in 2014. Thirty-three states had higher unreturned rates in 2014 than in 2010, including nine that had increases in excess of 10 percentage points.

Performance varied by region

At least four indicators varied substantially by region:7

- Mail ballots rejected. The average rate of mail ballots rejected in 2010 and 2014 in the West was 0.4 percent, significantly greater than the rates in the Midwest and Northeast (both 0.2 percent) and the South (0.1 percent).8 In 2014, the five states with the highest mail-ballot rejection rates were in the West. This is not necessarily surprising given that three of the states (Colorado, Oregon, and Washington) hold elections entirely by mail, and the other two (Arizona and California) allow voters to enroll in permanent mail voting. Together, these policies raise the number of mail ballots sent to voters, which in turn increases the rate at which mail ballots are rejected as a share of all ballots cast.

- Nonvoting due to disability or illness-related problems. The average rate for this indicator across both years was 14.7 percent in the South and 13.0 percent in the Northeast, significantly greater than the rates in the Midwest (10.9 percent) and West (10.0 percent).9 In 2010 and 2014, 13 of the 15 highest rates for this indicator were in the Northeast or South, while, not surprisingly, the states that hold all-mail elections had the lowest rates in both years.

- Average wait times. The average wait to vote in the South in 2014 was 5.6 minutes, more than two minutes longer than the averages in the Midwest and the Northeast (both 3.2 minutes) and the West (2.6 minutes).10 Nine of the 10 states with the longest waits were in the South.

- Turnout. Average turnout across 2010 and 2014 was lower in the South (37.8 percent) than in the West (43.2 percent), Northeast (43.4 percent), or Midwest (44.5 percent).11

Data completeness

Nationally, the completeness of data that states reported on the Election Administration and Voting Survey (EAVS) has improved over the past four years. In 2014, 26 states and the District reported 100 percent complete data on the portions of the survey that are used for the EPI. This is a marked improvement from the 11 states (plus the District) that did so on the 2010 EAVS. The more complete the data collected by states, the better they can understand which aspects of election administration are working well and which need improvement.

Seven states improved their data completeness by more than 10 percentage points, and a number of these states had large increases in their EPI scores and ranks. New York went from 67 percent complete data in 2010 to 91 percent in 2014, and its overall score rose 17 percentage points, well above the national average increase. West Virginia collected 100 percent of its data in 2014, up from 81 percent in 2010, and its overall score increased nearly 13 percentage points.

Data Quality

In addition to compiling more complete data, state officials who improve the accuracy of the data they collect will be better able to understand how effectively their offices are functioning, which can spur innovation, increase efficiency, and ultimately lead to a better experience for voters. After comparing responses to the 2010 and 2014 EAVS, some states were able to uncover errors in their 2010 data, which led to updated rankings for that year:

- In Virginia, officials improved the process used to generate responses to the EAVS, which helped capture more accurate data about military and overseas voters and rejected registrations. As a result, the state was able to compile and report fully on its rate of military and overseas ballots rejected in 2010, which it initially had been unable to do. The improved process helped the state jump from 23rd to 14th in the 2010 EPI.

- Louisiana discovered errors in its data on rejected registrations and absentee ballots, which, when corrected, dropped the state from 14th to 23rd in the 2010 rankings.

- Utah found problems with its process for generating data points for the 2014 EAVS. These issues made it impossible to score the state on several EPI indicators and were partly responsible for the decline in the state’s ranking from 18th in 2010 to 36th in 2014.

Wait times

Nationally, the average reported wait time in 2014 was 3.8 minutes; the longest, 8.8 minutes, was in North Carolina. As expected with the much lower turnout that is typical of midterm elections, the reported wait times for 2014 were shorter than those in 2008 (14.4 minutes) and 2012 (11.9 minutes). The 2014 EPI is the first to include wait times to vote for a midterm election, so no points of comparison are available from the 2010 index.12

The states with the shortest waits—all less than two minutes—were Colorado, Idaho, Iowa, New Jersey, Oregon, Pennsylvania, South Dakota, Vermont, and Washington.

Recommendations

The results of the 2014 EPI suggest two key recommendations for states to continue upgrading their election administration:

Improve election data management and reporting

The quality of state elections data has steadily improved since the first edition of the EPI, but many states still have room for improvement. A 2015 summit on elections data, hosted by the Election Assistance Commission, revealed persistent challenges to accurate data reporting.13

The difficulties often begin at the local level, where election administrators need better tools to maintain consistent records throughout the election cycle. Officials also need common definitions, so that, for instance, every jurisdiction in the state understands and agrees on what “rejected registration” means and records this measure consistently. State and local governments have also reported needing to maintain a second set of records to manage certain critical data points that their voter registration or election management systems could not record, a practice that increases the risk of error and is an inefficient use of limited resources.

Finally, administrators need to be thoughtful when developing the questions and creating processes they use to report data to the EAVS and other repositories. Queries should be checked for accuracy at regular intervals because data systems change over time.
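A recurring check of reported figures might look like the following minimal sketch. It assumes a simplified record with three hypothetical fields (`ballots_sent`, `ballots_returned`, `ballots_rejected`); these names are illustrative and are not EAVS item names. The checks themselves reflect only basic arithmetic consistency, not any official validation rule.

```python
REQUIRED_FIELDS = ("ballots_sent", "ballots_returned", "ballots_rejected")


def check_record(record):
    """Return a list of problems found in one jurisdiction's reported counts."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None:
            problems.append(f"{field} is missing")
        elif value < 0:
            problems.append(f"{field} is negative")
    if problems:
        return problems  # the cross-field checks need all three values
    if record["ballots_returned"] > record["ballots_sent"]:
        problems.append("more ballots returned than sent")
    if record["ballots_rejected"] > record["ballots_returned"]:
        problems.append("more ballots rejected than returned")
    return problems


# Hypothetical records: one consistent, one with a likely query error.
print(check_record({"ballots_sent": 100, "ballots_returned": 60, "ballots_rejected": 3}))
print(check_record({"ballots_sent": 100, "ballots_returned": 60, "ballots_rejected": 75}))
```

Running a check like this each time the reporting query is regenerated is one way to notice when a change in the underlying data system has silently altered the results.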

Use polling place management tools to allocate resources and reduce wait times

Even states with low average wait times often have significant variation in the length of lines across jurisdictions and polling locations. One of the key missions of the Presidential Commission on Election Administration was to investigate the causes of long lines at polling places to help states and localities reduce the waits for voters.14 In response, researchers across the country produced several models to help election administrators predict the lengths of lines at the polls throughout the day and make decisions about the number of polling places and voting stations needed for each precinct. These tools are available to states free of charge through the commission’s website and can be customized with local data.15 Election administrators should take advantage of these tools to guide resource allocation and minimize lines on Election Day.

Conclusion

The Elections Performance Index provides an opportunity for policymakers, election administrators, and the public to see how states performed across elections and to evaluate changes or trends. The new edition adds data from the 2014 election, allowing users to compare midterm voting for the first time. Future iterations will offer still more opportunities to evaluate the state of U.S. elections over an extended time frame.

As data improve, the index will provide additional and more nuanced metrics to assess state performance. When states change their policies or administration, the index will be able to track the effect of those actions; as more is known about how elections run and how best to measure them, the indicators will be expanded and refined to better reflect the characteristics of effective, efficient election administration.

Endnotes

1. The residual vote rate indicator is not measured for midterm elections because adequate data are not available to create a metric comparable to the rate used in presidential election years.

2. Presidential Commission on Election Administration, The American Voting Experience: Report and Recommendations of the Presidential Commission on Election Administration (January 2014), https://www.supportthevoter.gov/files/2014/01/Amer-Voting-Exper-final-draft-01-09-14-508.pdf.

3. Data for wait times were not available in 2010 but were in 2014. The rate at which registrations were rejected decreased from 9.3 to 4.1 percent, but the drop was driven mostly by large declines in a couple of states. Additionally, the number of states requiring a post-election audit increased from 29 to 33.

4. As of August 2016, 31 states and the District of Columbia will allow online voter registration.

5. The percentage registered decreased from 79.1 to 78.6 percent, provisional ballots cast increased from 0.95 to 1.0 percent, provisional ballots rejected increased from 0.16 to 0.17 percent, and mail ballots rejected remained at 0.23 percent.

6. United States Elections Project, “National Turnout Rates, 1787-2012,” http://www.electproject.org/home/voter-turnout/voter-turnout-data.

7. The index uses the U.S. Census Bureau regional designations of Northeast, Midwest, South, and West. The Northeast includes Connecticut, Maine, Massachusetts, New Hampshire, New Jersey, New York, Pennsylvania, Rhode Island, and Vermont. The Midwest is made up of Illinois, Indiana, Iowa, Kansas, Michigan, Minnesota, Missouri, Nebraska, North Dakota, Ohio, South Dakota, and Wisconsin. The South includes Alabama, Arkansas, Delaware, the District of Columbia, Florida, Georgia, Kentucky, Louisiana, Maryland, Mississippi, North Carolina, Oklahoma, South Carolina, Tennessee, Texas, Virginia, and West Virginia. The West is composed of Alaska, Arizona, California, Colorado, Hawaii, Idaho, Montana, Nevada, New Mexico, Oregon, Utah, Washington, and Wyoming.

8. The difference in rate of mail ballots rejected in the West compared with the three other regions is statistically significant at p < 0.01.

9. Significant at p < 0.05.

10. Significant at p < 0.01.

11. Significant at p < 0.05.

12. In 2008 and 2012, average-wait-time-to-vote data were drawn from the Caltech/MIT Voting Technology Project’s Survey of the Performance of American Elections (SPAE). For the purpose of calculating overall EPI averages, the measures of voting wait times in Oregon and Washington (the only all-vote-by-mail states at the time) were set to missing and did not contribute to these states’ overall scores. In 2014, the SPAE included a question asking mail voters how long they had to wait to deposit their ballots at the drop-off site. The responses to this question were averaged with the wait times experienced by voters who cast their ballots at polling places.

13. U.S. Election Assistance Commission’s Election Data Summit: How Good Data Can Help Elections Run Better (Aug. 12–13, 2015), http://www.eac.gov/eac_election_data_summit_—_how_good_data_can_help_elections_run_better.

14. Presidential Commission on Election Administration, The American Voting Experience.

15. Presidential Commission on Election Administration, “Election Toolkit,” https://www.supportthevoter.gov.